I recently finished watching “Westworld,” an HBO television series based on the 1973 movie of the same title. Both told the story of a theme park filled with animatronic characters who interacted with the guests. The characters, called “hosts,” were so advanced that it was practically impossible to tell them apart from the human “guests.” Without going too far into the plot, this was, in my opinion, an extraordinarily well-done science fiction story, one that seemed far too close to reality for comfort.

Sentience

The capacity to feel, perceive, or experience subjectively. Sentience is the ability to feel, as distinguished from the ability to think (reason).

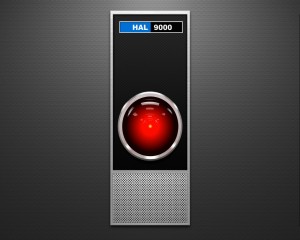

Westworld isn’t the first movie to explore the notion of artificial intelligence running amok. One of the most famous early examples is HAL 9000, the infamously intelligent computer in Stanley Kubrick’s 2001: A Space Odyssey (1968).

“I’m sorry, Dave. I’m afraid I can’t do that.”

Since then there have been countless movies about computers and/or robots turning on humans. In some cases the robots were monstrous and evil (Terminator). Others were action/adventure robots (I, Robot) or just plain creepy (Ex Machina), and then there was Steven Spielberg’s frankly disturbing A.I. Artificial Intelligence.

Artificial Intelligence

The standard definition of artificial intelligence (AI) is intelligence exhibited by machines. Generally, this is thought of as machines that imitate human cognitive functions, such as problem solving.

Incredible recent advances have made AI an everyday experience. For example, search for something on Google. As you type the first few letters, suggestions begin to appear, as though Google were trying to read your mind and figure out what you are looking for before you finish typing. This is a common use of an algorithm: a self-contained sequence of actions to be performed. To fill in your search (a feature called predictive text or autocomplete), Google draws on what other people in your geographical area have been searching for, your bookmarks, your recent searches, your web browsing history, and the patterns in your browsing and searching. In other words, Google carefully watches your behavior as you fill in that search box, returning suggestions before you can even type them. Scary? It probably should be, but we’ve grown so accustomed to it that we don’t even think about it. This is artificial intelligence from a machine, or in this case software, solving problems for you.
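For the curious, here is a toy sketch of the basic idea behind autocomplete: suggest past searches that begin with the letters typed so far, ranked by how often they were searched. Google’s real system is far more elaborate and not public; the function name `suggest` and the sample search history are made up purely for illustration.

```python
from collections import Counter


def suggest(prefix: str, past_queries: list[str], k: int = 3) -> list[str]:
    """Return up to k past queries that start with prefix, most frequent first."""
    # Count how often each (lowercased) query has been searched before
    counts = Counter(q.lower() for q in past_queries)
    p = prefix.lower()
    # Keep only the queries that begin with what the user has typed so far
    matches = [q for q in counts if q.startswith(p)]
    # Rank by popularity (highest count first), breaking ties alphabetically
    matches.sort(key=lambda q: (-counts[q], q))
    return matches[:k]


# Hypothetical search history, standing in for "what others nearby searched"
history = [
    "weather today",
    "westworld cast",
    "westworld season 2",
    "westworld cast",
    "west virginia",
    "westworld cast",
    "westworld season 2",
]

print(suggest("westw", history))  # ['westworld cast', 'westworld season 2']
print(suggest("we", history))     # ['westworld cast', 'westworld season 2', 'weather today']
```

A real system would presumably weigh many more signals, like the ones listed above, when deciding which candidates to show and in what order.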

Google searches are, of course, commonplace today. We accept them as part of our normal lives. How about Siri or Alexa? We ask them questions, and they give us answers. We’ve adapted to speaking to our phones and computer systems, or is it speaking “with” them? When does that interaction between man and machine begin to get muddy? Meet Samantha, the artificially intelligent operating system in the 2013 film Her.

So when does the computer become “real”? When is it more than a machine?

British code breaker Alan Turing, who helped crack the German Enigma cipher during World War II, proposed a test (now known as the Turing Test): if a person communicates with a machine and cannot tell whether the responses come from another person or from a machine, the machine has passed. To paraphrase a line from Westworld, when a “host” is asked whether they are human or machine, the host replies, “If you can’t tell, what difference does it make?”

So, could computers and artificial intelligence become self-aware? Could they become sentient? Far-fetched, perhaps, but some pretty smart folks have qualms.

Stephen Hawking, the British physicist often referred to as one of the smartest people in the world, told the BBC: “The development of full artificial intelligence could spell the end of the human race. It would take off on its own, and re-design itself at an ever increasing rate. Humans, who are limited by slow biological evolution, couldn’t compete, and would be superseded.”(1)

Bill Gates seems to agree: “I am in the camp that is concerned about super intelligence,” Gates wrote. “First the machines will do a lot of jobs for us and not be super intelligent. That should be positive if we manage it well. A few decades after that though the intelligence is strong enough to be a concern. I agree with Elon Musk and some others on this and don’t understand why some people are not concerned.”(2)

Tesla founder Elon Musk seems to suggest the same thing: “I think we should be very careful about artificial intelligence. If I were to guess like what our biggest existential threat is, it’s probably that. So we need to be very careful with the artificial intelligence. Increasingly scientists think there should be some regulatory oversight maybe at the national and international level, just to make sure that we don’t do something very foolish. With artificial intelligence we are summoning the demon. In all those stories where there’s the guy with the pentagram and the holy water, it’s like yeah he’s sure he can control the demon. Didn’t work out.” (2)

The question seems to be whether or not machines with AI can become conscious, or self-aware. Watch these tiny robots take a test:

“…It may seem pretty simple, but for robots, this is one of the hardest tests out there. It not only requires the AI to be able to listen to and understand a question, but also to hear its own voice and recognise that it’s distinct from the other robots. And then it needs to link that realisation back to the original question to come up with an answer.”

To find out how this little robot became self-aware, click the link below:

Robot passes self-awareness test

Technological Singularity

The technological singularity is the hypothetical creation of an artificial superintelligence so sophisticated that it could become runaway, triggering its own “intelligence explosion” beyond the control of its makers. The argument is that it is possible to build a machine more intelligent than humans, and that this machine would begin to rebuild itself, literally writing its own software and growing more and more intelligent as it goes. Some point to Moore’s law, the observation that the number of transistors on a computer chip doubles roughly every two years, as a sign that this is not only possible, but plausible and even likely over time.
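Moore’s law is commonly stated as a doubling of transistor counts roughly every two years. Taking that two-year figure as an assumption, with N₀ as today’s count and t measured in years, a quick worked calculation shows why steady doubling looks so dramatic:

```latex
N(t) = N_0 \cdot 2^{t/2}, \qquad
\frac{N(20)}{N_0} = 2^{20/2} = 2^{10} = 1024
```

In other words, two decades of doubling every two years would mean roughly a thousandfold increase.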

Is this real, or just the stuff of vivid imaginations and screenwriters? Several of the people mentioned above have been involved with the Future of Life Institute, which seems to take these things seriously.

So maybe humans will be ruled by machines sometime in the future. Or maybe it’s just fun science fiction. Which brings us back to HAL:

Endnotes

1. Beware the Robots, Says Stephen Hawking

2. Bill Gates on Dangers of Artificial Intelligence — Washington Post

Future of Life Institute — Wikipedia

Artificial Intelligence — Wikipedia